OpenAI Unveils Child Safety Blueprint to Combat AI-Driven Child Exploitation

Here's what it means for you.

If you work in tech, education, or child welfare, this blueprint signals tighter accountability standards that could change how you build products, handle reports, and protect children online.

Why it matters

The blueprint calls for modernizing child protection laws to counter the rising threat of AI-enabled exploitation, with consequences for tech companies and child safety advocates alike.

What happened (in 30 seconds)

- On April 8, 2026, OpenAI released the Child Safety Blueprint to enhance protections against AI-enabled child sexual exploitation.

- The initiative was developed in collaboration with key organizations like the National Center for Missing & Exploited Children (NCMEC) and Thorn.

- It proposes legislative modernization, improved detection protocols, and safety-by-design measures for AI systems.

The context you actually need

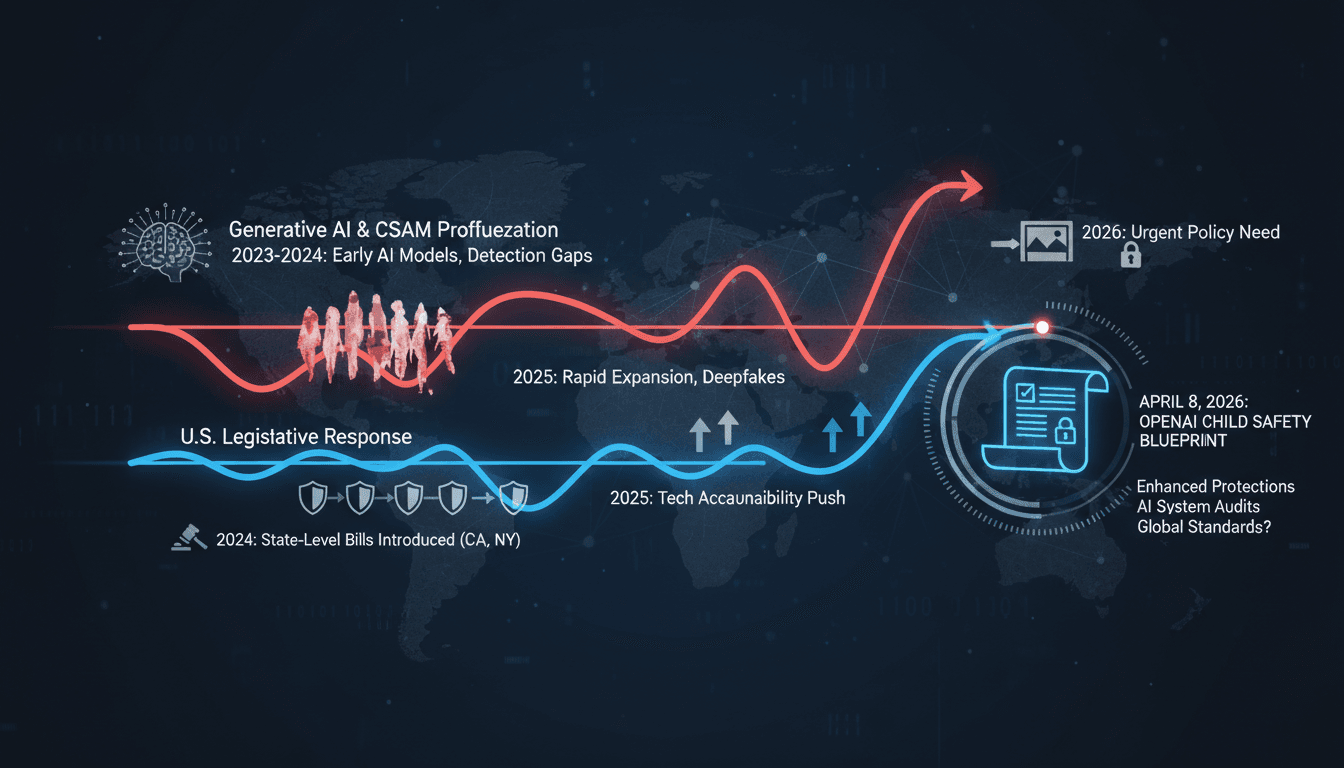

- Generative AI technologies have significantly lowered barriers to producing synthetic child sexual abuse material (CSAM), complicating detection and intervention.

- As of August 2025, 45 U.S. states had enacted laws addressing AI-generated CSAM, reflecting a growing urgency for tech accountability.

- OpenAI's previous collaborations with NCMEC highlighted existing gaps in U.S. child protection laws and operational standards, prompting this comprehensive blueprint.

What's really happening

OpenAI's Child Safety Blueprint responds to a sharp rise in child sexual exploitation facilitated by generative AI. Since 2023, these tools have made it possible to produce synthetic and altered CSAM quickly, letting perpetrators scale grooming operations and evade traditional detection methods. The blueprint takes a three-part approach: modernizing existing laws, standardizing reporting protocols, and embedding safety measures into AI systems.

The collaboration with organizations like NCMEC and Thorn underscores how urgent the problem has become. These partnerships are more than symbolic: they draw on the expertise of child protection advocates and legal experts. The blueprint proposes technology-agnostic laws, meaning laws that do not depend on any particular technology, so that legal frameworks stay relevant as new tools emerge. This matters because existing laws often lag behind technological change, leaving gaps that exploiters can take advantage of.

The blueprint also emphasizes structured CyberTipline reporting to improve the quality of information submitted about potential CSAM. Including AI indicators and context in these reports is meant to help law enforcement and child protection agencies respond faster and more effectively, at a time when traditional detection methods are increasingly inadequate.

Safety-by-design measures, such as intent detection and human oversight, are the third core component. Building these features into generative AI systems from the start, rather than adding them after release, puts the burden on developers to prevent misuse instead of only reacting to it. This marks a cultural shift toward accountability in AI development and reflects a growing recognition of the technology's ethical implications.

The blueprint arrives as lawsuits against tech giants over child safety lapses are mounting, part of a broader push for accountability in the tech industry. As public awareness of these issues grows, other companies may face pressure to adopt similar safety measures, and the Child Safety Blueprint could become a benchmark for responsible AI development that influences practices globally.

Who feels it first (and how)

- Tech companies: Increased scrutiny and potential legal obligations regarding AI safety measures.

- Child protection organizations: Enhanced frameworks for reporting and intervention.

- Parents and educators: Greater awareness and resources for safeguarding children online.

What to watch next

- Legislative changes: Monitor state and federal responses to the blueprint, as new laws may emerge to address AI-generated CSAM.

- Industry adoption: Watch how tech companies implement safety-by-design measures in their AI systems, which could set new industry standards.

- Public response: Keep an eye on social media and public discourse surrounding child safety and AI, as this could influence future policy directions.

OpenAI's Child Safety Blueprint has been publicly released and outlines specific recommendations.

Legislative activity around AI-generated CSAM may increase in response to the blueprint.

The long-term effectiveness of the proposed measures in reducing child exploitation remains uncertain.

Insights by A47 Intelligence

Curated tech headlines including AI stories.

"Influential aggregator surfacing the day’s top tech/AI links."

— A47 Editor

OpenAI releases the Child Safety Blueprint tackling AI-enabled child sexual exploitation, focusing on updating legislation and improving detection and reporting (Lauren Forristal/TechCrunch)

OpenAI has introduced the Child Safety Blueprint, a strategic initiative aimed at combating AI-enabled child sexual exploitation by advocating for updated legislation and improved detection and reporting mechanisms. This release comes amid rising con...

Startup news with frequent AI coverage.

"Covers launches, funding, and product updates in AI."

— A47 Editor

OpenAI releases a new safety blueprint to address the rise in child sexual exploitation

OpenAI has released a new Child Safety Blueprint aimed at addressing the rising concerns of child sexual exploitation associated with advancements in artificial intelligence. This initiative reflects the company's commitment to enhancing safety measu...