Google Employees Protest AI Contract with Pentagon

Here's what it means for you.

The growing pushback against military AI contracts could reshape tech industry ethics and influence global AI governance.

Why it matters

This employee-led initiative highlights rising concerns over the ethical implications of AI in military applications, potentially affecting tech partnerships and regulations worldwide.

What happened (in 30 seconds)

- On April 27, 2026, more than 600 Google employees sent an open letter to CEO Sundar Pichai urging him to reject a proposed classified AI deal with the Pentagon.

- The signatories, primarily from DeepMind and Google Cloud, cited risks of AI misuse in mass surveillance and autonomous weapons, arguing that safeguards cannot be enforced in classified environments.

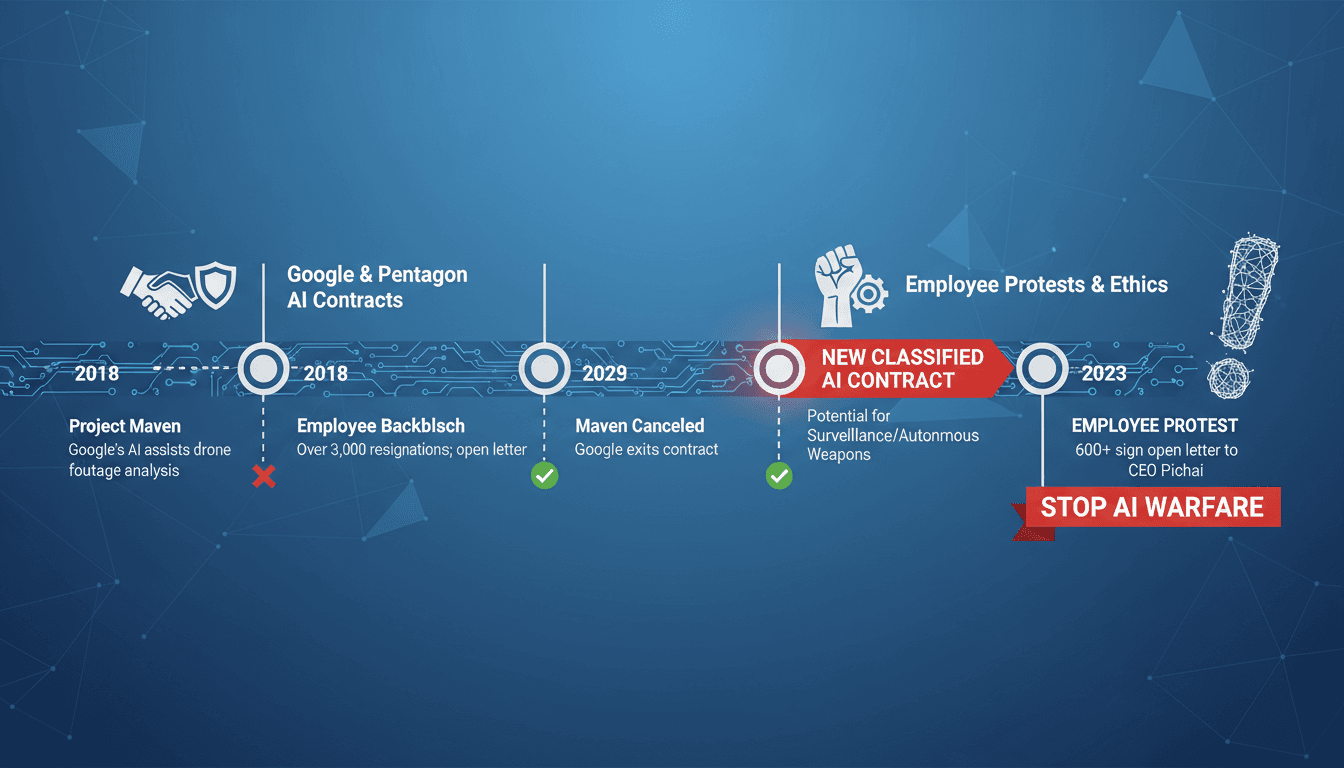

- The letter follows earlier employee protests against military contracts, most notably over Project Maven in 2018, and comes amid ongoing negotiations over deploying Google's Gemini AI in classified settings.

The context you actually need

- Google's conflict with its workforce over military contracts dates back to 2018, when employee protests over the ethics of AI-assisted drone surveillance led the company to exit the Project Maven contract.

- Recent tensions escalated in February 2026 when Anthropic was excluded from Department of Defense contracts after demanding ethical guardrails, highlighting a growing divide in the tech industry regarding military partnerships.

- Negotiations reported in April 2026 indicated that Google was considering deploying its Gemini AI for classified Pentagon uses, raising alarms among employees about potential ethical and reputational risks.

What's really happening

The recent open letter from Google employees reflects a significant shift in the tech industry's relationship with military contracts, particularly in the realm of artificial intelligence. The protest is not just a reaction to a single contract but part of a broader movement within the tech community advocating for ethical standards in AI development and deployment.

Google has faced backlash over military projects before, most notably Project Maven, which aimed to enhance drone surveillance capabilities using AI. Employee protests at the time were pivotal in Google's decision to withdraw from the contract. By 2026, more tech companies are facing scrutiny over their military ties. Anthropic's exclusion from Department of Defense contracts after it demanded ethical safeguards is a precedent that resonates with Google employees.

The current negotiations over deploying Gemini AI in classified settings have reignited fears about misuse. Employees argue that once AI systems operate in classified environments, safeguards can no longer be enforced. The letter points to two risks in particular, mass surveillance and autonomous weapons, and both raise questions about accountability and transparency.

The implications of this protest extend beyond Google. As more employees in the tech sector voice their concerns, it could lead to a reevaluation of how companies engage with military contracts. This movement may inspire similar actions in other tech firms, creating a ripple effect that could reshape industry standards and practices regarding military AI applications.

Moreover, the protest highlights a growing awareness among tech workers about the ethical ramifications of their work. As AI technologies become increasingly integrated into military operations, the call for ethical boundaries is likely to gain traction, influencing not only corporate policies but also public perception and regulatory frameworks.

The pushback from Google employees against the Pentagon AI contract is emblematic of a larger cultural shift within the tech industry, in which ethical considerations are becoming central to discussions about the future of AI and its use in warfare.

Who feels it first (and how)

- Tech employees: Workers in the tech sector may feel empowered to voice their concerns about ethical practices in their companies.

- Military contractors: Companies that rely on government contracts for AI technologies may face increased scrutiny and pressure to adopt ethical standards.

- Regulatory bodies: Government agencies may need to reassess their partnerships with tech firms and establish clearer guidelines for ethical AI use in military contexts.

What to watch next

- Public response from Google and the Pentagon: Their reactions could set the tone for future military contracts and influence employee sentiment across the tech industry.

- Emerging regulations on military AI: New policies could arise as governments respond to employee protests and public concerns about ethical AI use.

- Industry-wide movements: Watch for potential solidarity actions from employees at other tech firms, which could amplify the call for ethical standards in military AI applications.

Over 600 Google employees have signed an open letter opposing the classified AI deal with the Pentagon.

Increased scrutiny and calls for ethical standards in military AI contracts across the tech industry.

How Google and the Pentagon will respond to the employee protests and what impact it will have on future contracts.

Insights by A47 Intelligence

Capitol Hill news, legislation, and policy insight.

"The Hill specializes in U.S. politics and policy, with a focus on Capitol Hill developments and a reputation for insider reporting."

— A47 Editor

Google workers urge CEO to reject classified AI work with Pentagon

Hundreds of Google employees have urged the company's CEO to reject any potential collaboration with the Pentagon involving classified artificial intelligence projects, citing risks similar to those faced by Anthropic, which was recently banned from ...

Tech news, reviews, and analysis of consumer electronics, science, art, and culture.

"The Verge is a technology-focused media outlet known for in-depth reporting, product reviews, and coverage of the intersection between technology and culture."

— A47 Editor

Google employees ask Sundar Pichai to say no to classified military AI use

Over 600 Google employees have signed a letter urging CEO Sundar Pichai to prevent the Pentagon from utilizing the company's AI models for classified military purposes. The letter's organizers claim that many signers are from Google's DeepMind AI lab...

Business and tech news excluding paywalled content.

"High-volume business/tech outlet with frequent AI coverage."

— A47 Editor

Hundreds of Googlers ask their CEO to block classified AI work with the Pentagon

About 600 Google employees urge CEO Sundar Pichai to block classified Pentagon AI deals, citing ethical concerns over military use of AI for weapons.

Opinionated AI coverage for general audiences.

"TNW’s AI vertical covering tools, ethics, and trends."

— A47 Editor

Google’s AI researchers told management to refuse classified military work. Management spent three years making sure it could say yes.

Over 580 Google employees, including senior leaders, have signed a letter urging CEO Sundar Pichai to reject classified military AI projects with the Pentagon, reflecting significant internal dissent regarding the company's direction in military coll...

Curated tech headlines including AI stories.

"Influential aggregator surfacing the day’s top tech/AI links."

— A47 Editor

More than 600 Google employees, including many from DeepMind, sign a letter to Sundar Pichai demanding he bar the DOD from using Google's AI for classified work (Gerrit De Vynck/Washington Post)

Over 600 Google employees, including many from DeepMind, have signed a letter addressed to CEO Sundar Pichai, urging him to prevent the U.S. Department of Defense (DOD) from utilizing Google's AI technologies for classified military purposes. The let...

Technology business news, market impacts, and innovation trends.

"Bloomberg is a premier financial and tech news provider, respected for its in-depth reporting and analytical rigor."

— A47 Editor

Google Staff Urge Pichai to Refuse Classified Military AI Work

Hundreds of AI researchers at Google have signed a letter urging CEO Sundar Pichai to reject the use of the company's artificial intelligence systems for classified military applications, reflecting growing concerns over ethical implications in defen...